Week 14: Connections

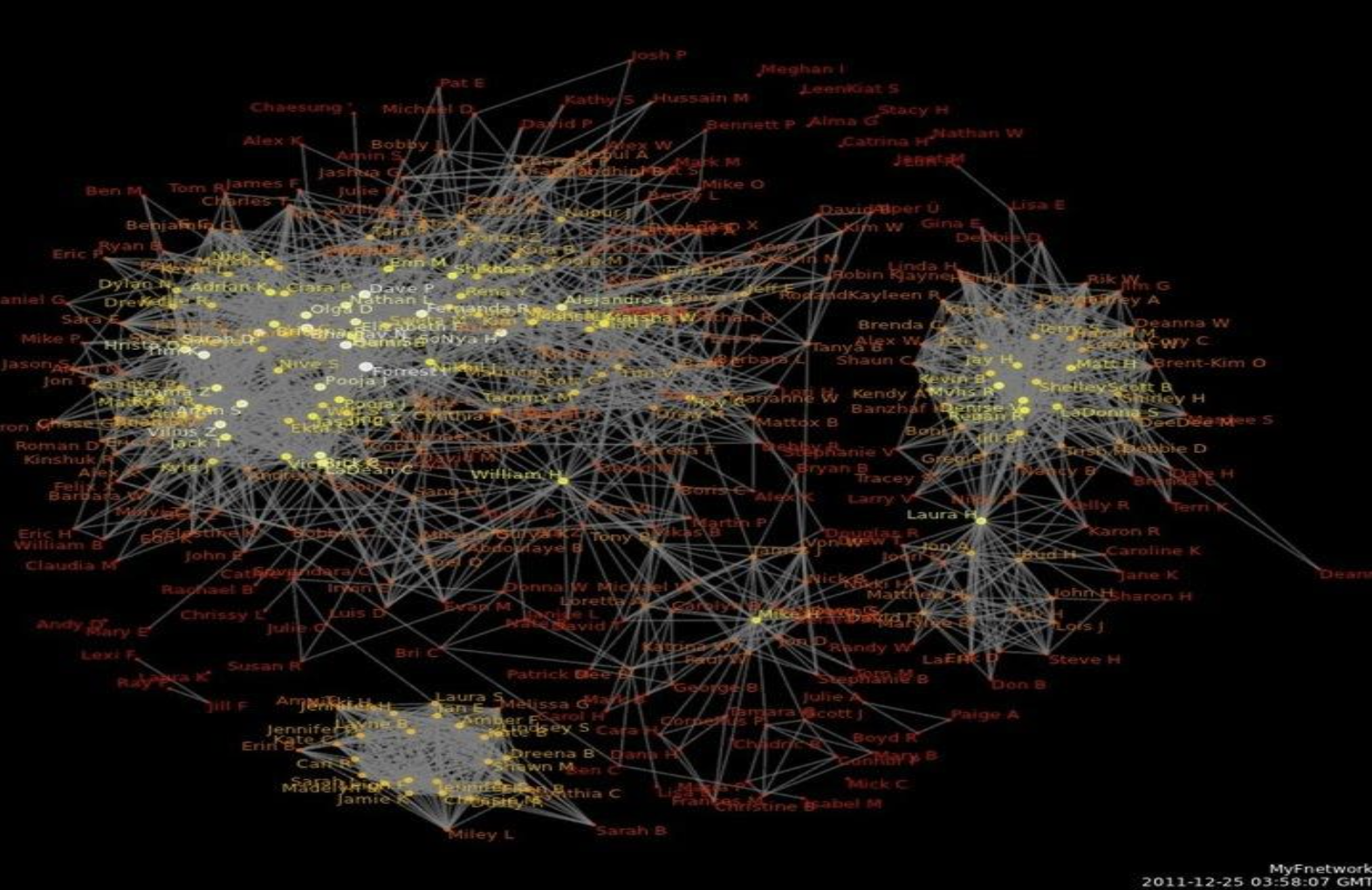

Two very different classes this week, held together by a single theme: connections. On Tuesday we built a social network from a novel — a graph where characters are vertices and shared paragraphs are edges. On Thursday we zoomed out and asked the bigger question: why does following your curiosity across completely unrelated domains keep paying off? Both days were about discovering that things you thought had nothing to do with each other turn out to be deeply, strongly connected.

Your (Completed!) Toolkit

- Representation — how we encode meaning

- Collections — how we group things

- Control flow — how we make decisions and repeat

- Functions — how we name and reuse logic

- Abstraction — how we hide complexity

- Efficiency — how we measure cost

This week: Social Networks + NLP pipelines + Acquired Diversity — the whole term in one week!

Thursday: Strongly Connected Components

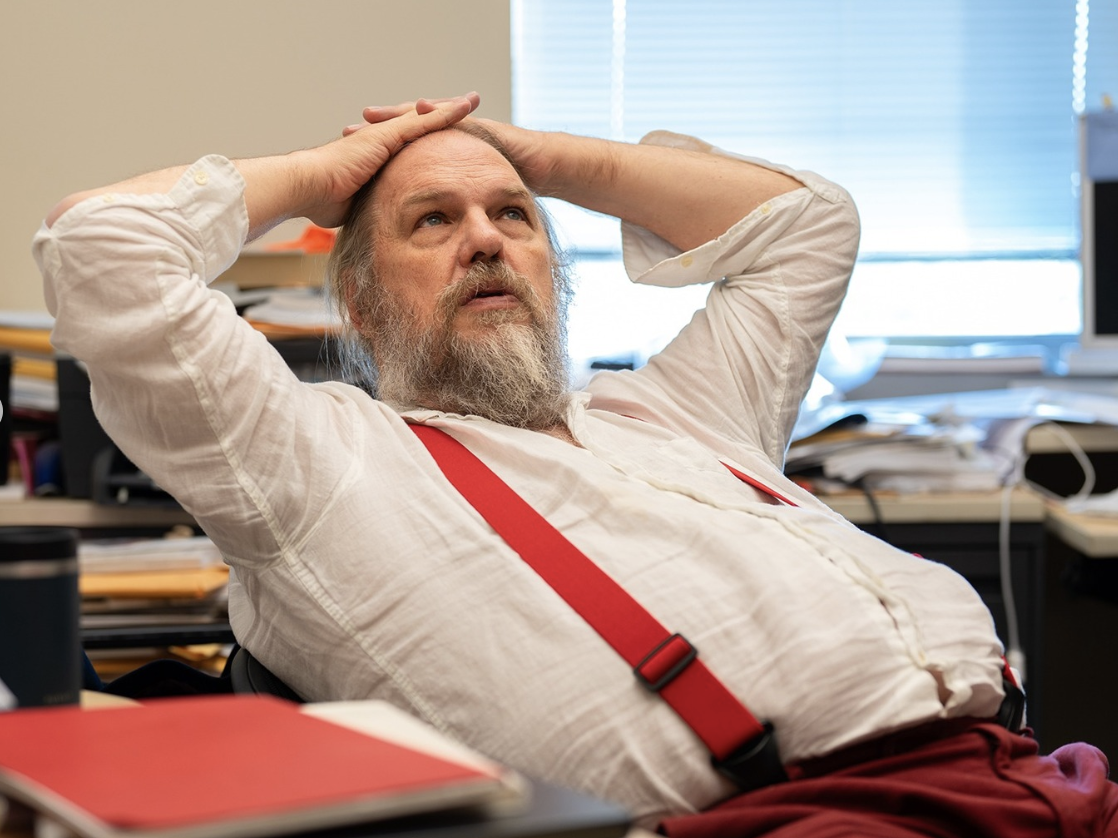

A Story About Following Curiosity

Thursday was the last lecture of the term, and it was a little different. Instead of new technical content, it was a story about how following your interests across seemingly unrelated domains keeps paying off in unexpected ways. The technical concept at the heart of it — strong connectivity — turned out to be a perfect metaphor for what we were describing.

It Started With National Parks

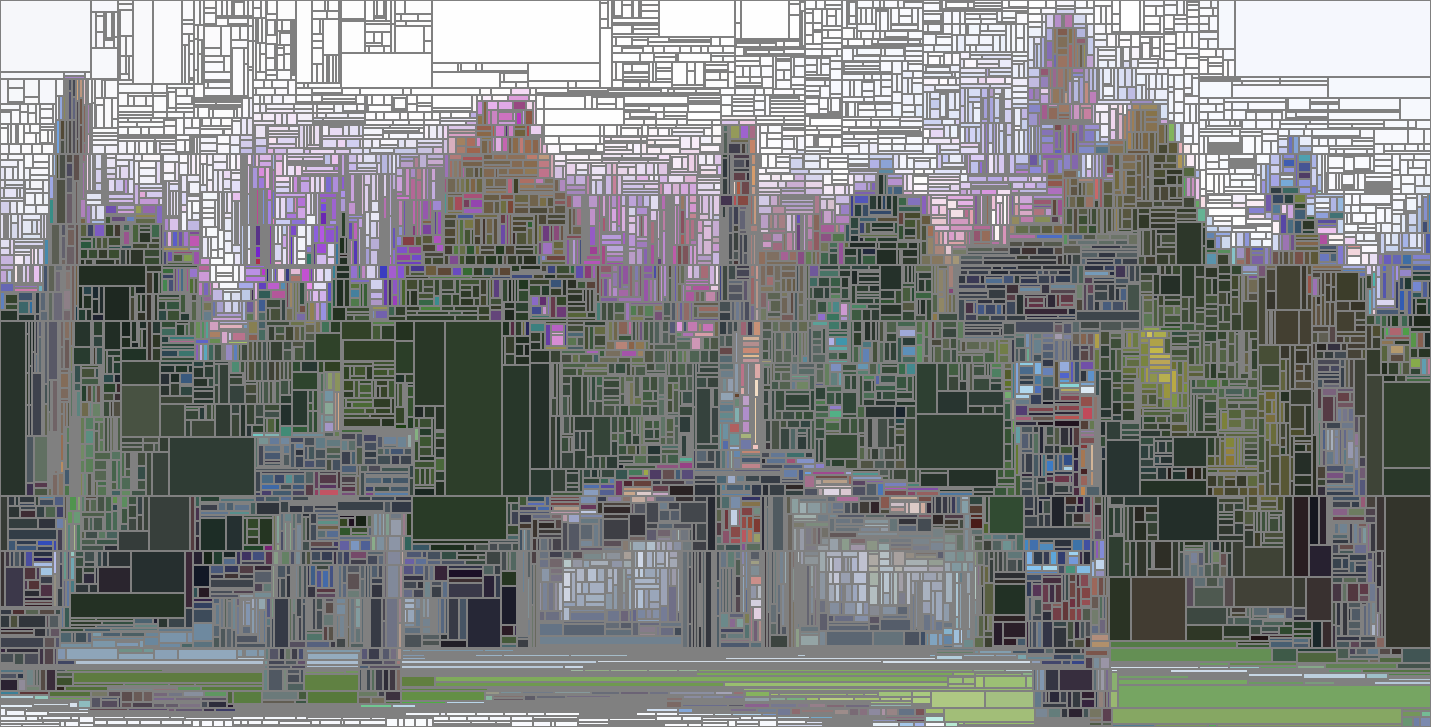

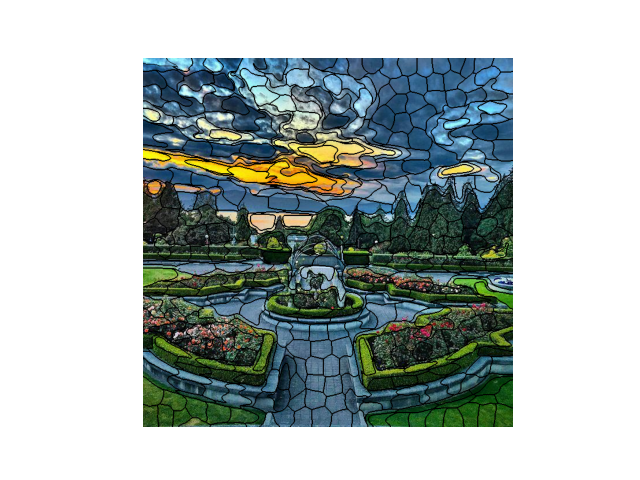

The starting point: photographs from national parks, a desire to turn them into something that looks like stained glass — algorithmically. Not painting by hand. Coding it.

Voronoi Wasn’t Quite Right

We already know Voronoi diagrams. The idea was to use Voronoi regions as the “panes” of the stained glass. Here’s how that went:

The problem: Voronoi cells are seeded randomly, so they cut right across color boundaries rather than following them. What we really want is regions that are equal-sized, contiguous, compact, and honor image boundaries.

Pointillism as a Stepping Stone

Voronoi does work beautifully as pointillism — using the centroid color of each region as a single dot. But for stained glass, we need the regions to hug the color boundaries. Time to ask for help.
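To make the mismatch concrete, here is a minimal pure-Python sketch of Voronoi pointillism on a toy image. The function names, the fixed seed positions (chosen for reproducibility rather than drawn at random), and the reading of "centroid color" as the mean color of each cell are all assumptions for illustration, not the lecture's actual code.

```python
def voronoi_labels(width, height, seeds):
    """Label each pixel with the index of its nearest seed (squared Euclidean distance)."""
    labels = []
    for y in range(height):
        row = []
        for x in range(width):
            nearest = min(
                range(len(seeds)),
                key=lambda i: (x - seeds[i][0]) ** 2 + (y - seeds[i][1]) ** 2,
            )
            row.append(nearest)
        labels.append(row)
    return labels


def mean_color_per_cell(image, labels, n_cells):
    """Average the (r, g, b) values of the pixels that fall in each cell."""
    sums = [[0, 0, 0] for _ in range(n_cells)]
    counts = [0] * n_cells
    for y, row in enumerate(labels):
        for x, cell in enumerate(row):
            for channel in range(3):
                sums[cell][channel] += image[y][x][channel]
            counts[cell] += 1
    return [
        tuple(s / n for s in total) if n else None
        for total, n in zip(sums, counts)
    ]


RED, BLUE = (255, 0, 0), (0, 0, 255)
# Toy 4x1 image: the color boundary sits between x=2 and x=3.
toy_image = [[RED, RED, RED, BLUE]]
toy_seeds = [(0, 0), (3, 0)]  # hypothetical seed positions as (x, y)

toy_labels = voronoi_labels(4, 1, toy_seeds)
toy_colors = mean_color_per_cell(toy_image, toy_labels, len(toy_seeds))
```

The cells split at the spatial midpoint, so the second cell holds one red and one blue pixel and averages to purple. That is fine for a dot, but it is the wrong shape for a pane of glass.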

Phone a Friend → Superpixels

“What you need are superpixels!”

Superpixels are an image-processing technique: groups of pixels clustered together into roughly equal-sized, contiguous, compact regions that honor color boundaries. The SLIC algorithm (Simple Linear Iterative Clustering) runs a localized k-means in a 5D space of position plus color: (x, y, r, g, b) in the simplest telling, though the original SLIC formulation measures color in CIELAB rather than raw RGB. Either way, it naturally groups spatially close, similar-colored pixels together.

Equal-sized. Contiguous. Compact. Honoring boundaries. Exactly what stained glass needs. And Python’s scikit-image library (imported as `skimage`) has SLIC built right in, as `skimage.segmentation.slic`.
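The core idea, clustering on position and color at once, can be sketched with plain k-means in pure Python. This is a simplified illustration under stated assumptions, not SLIC itself: real SLIC seeds centers on a grid, searches only a local window around each center, works in CIELAB, and enforces connectivity afterwards. The function name `kmeans_5d`, the `spatial_weight` parameter, and the toy data are hypothetical.

```python
def kmeans_5d(image, seed_pixels, spatial_weight=1.0, iterations=5):
    """Plain k-means over (x, y, r, g, b) features.

    `spatial_weight` scales the position terms relative to color
    (an assumed stand-in for SLIC's compactness trade-off).
    """
    height, width = len(image), len(image[0])

    def features(x, y):
        r, g, b = image[y][x]
        return (spatial_weight * x, spatial_weight * y, r, g, b)

    # Start each center at the feature vector of its seed pixel.
    centers = [features(x, y) for x, y in seed_pixels]
    labels = [[0] * width for _ in range(height)]

    for _ in range(iterations):
        # Assignment step: each pixel joins its nearest center in 5D.
        for y in range(height):
            for x in range(width):
                f = features(x, y)
                labels[y][x] = min(
                    range(len(centers)),
                    key=lambda k: sum((p - q) ** 2 for p, q in zip(f, centers[k])),
                )
        # Update step: move each center to the mean of its members.
        for k in range(len(centers)):
            members = [
                features(x, y)
                for y in range(height)
                for x in range(width)
                if labels[y][x] == k
            ]
            if members:
                centers[k] = tuple(sum(col) / len(members) for col in zip(*members))
    return labels


RED, BLUE = (255, 0, 0), (0, 0, 255)
# Toy 4x1 image: three red pixels, then one blue pixel.
strip = [[RED, RED, RED, BLUE]]
strip_labels = kmeans_5d(strip, seed_pixels=[(0, 0), (3, 0)])
```

A purely spatial nearest-seed rule would split this strip at the midpoint. Because color distance now counts, the third red pixel stays with the other reds and the region edge lands on the color boundary. For real images, `skimage.segmentation.slic(image, n_segments=..., compactness=...)` is the practical route.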

Then Came the Second Question

“How will you know if you’ve done a good job?”

This question opened a completely unexpected rabbit hole. What does it mean for a partition of an image into regions to be good? This led directly to… gerrymandering.

The Gerrymandering Detour

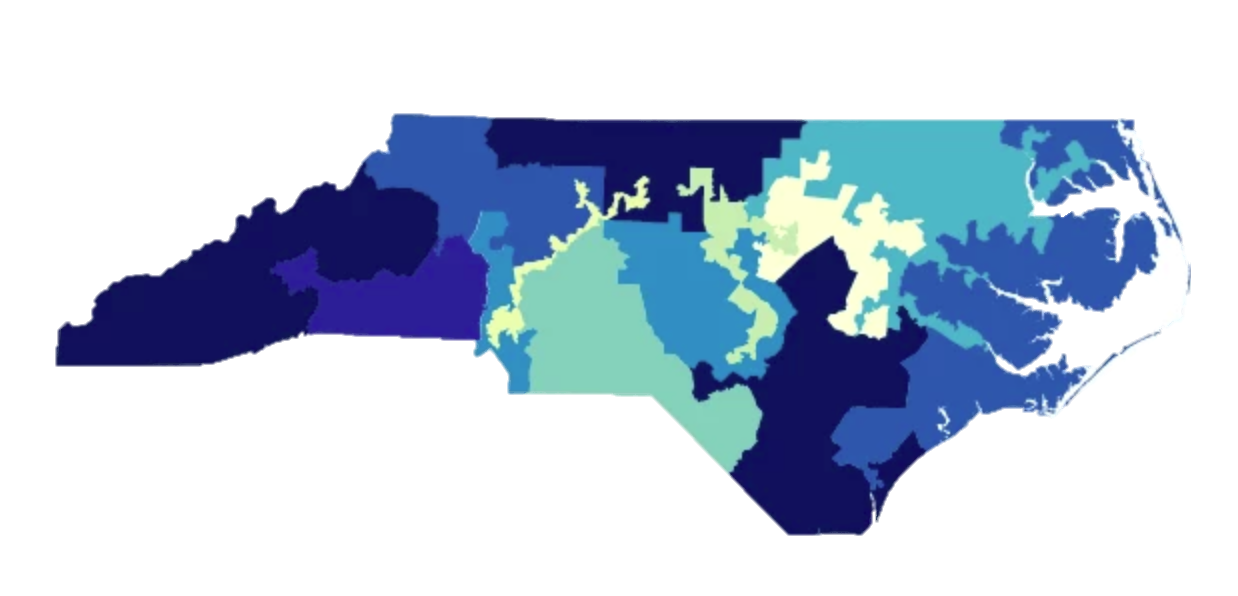

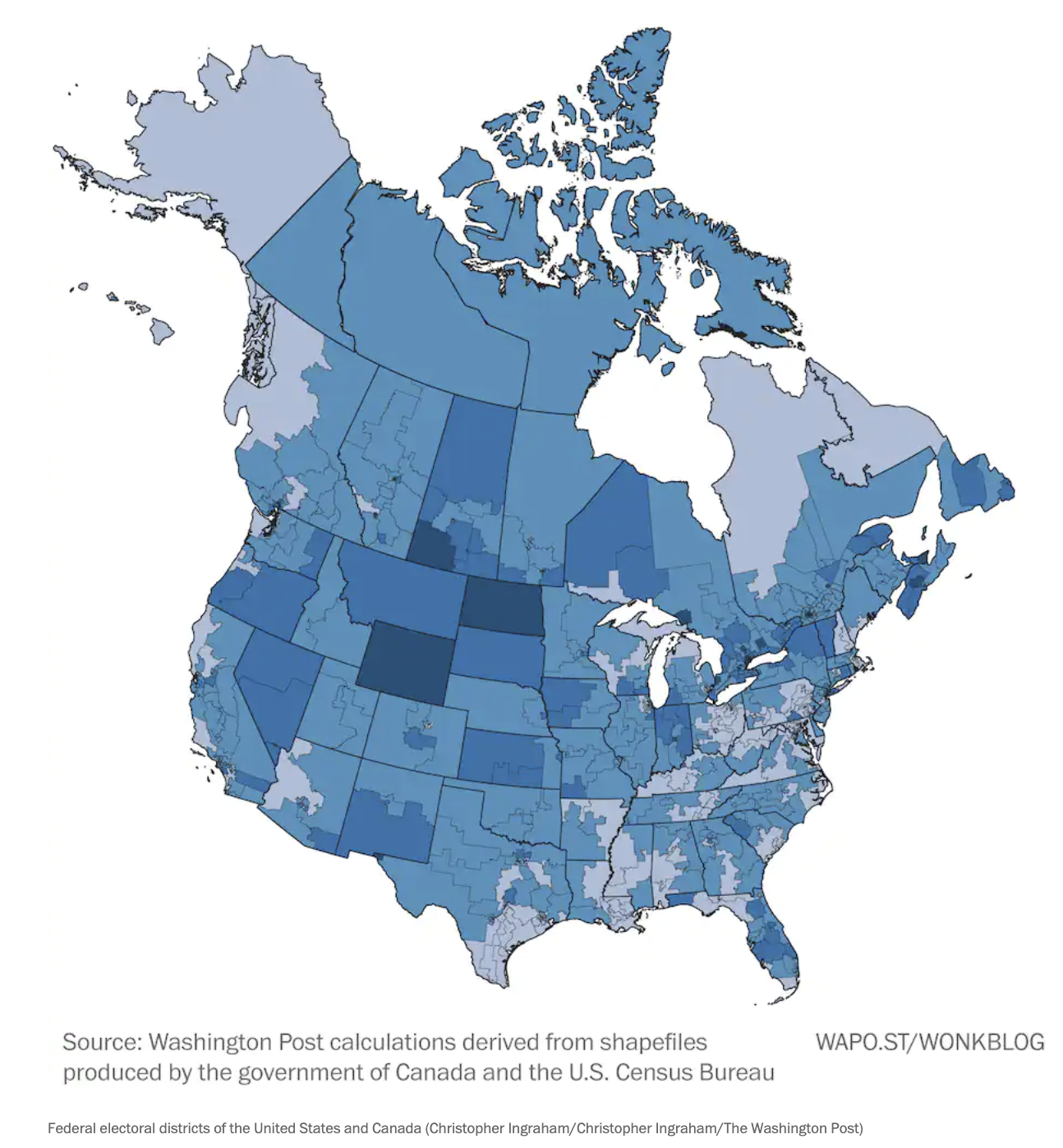

Gerrymandering is the drawing of political district boundaries to give one party an unfair advantage. The connection to superpixels: both problems are about dividing a 2D space into regions, and in both cases you want regions that are equal-sized, contiguous, and compact.

Gerrymandered ✗ — districts are not compact

Compact ✓ — districts follow natural geography

Canada addressed this problem in 1964 with a law requiring each province to form an independent panel to draw district boundaries, with no politicians involved. The result: much more compact, geographically sensible districts.

The lighter, larger regions are a direct result: districts that reflect geography rather than political strategy.

Which is Fair? The Three Scenarios

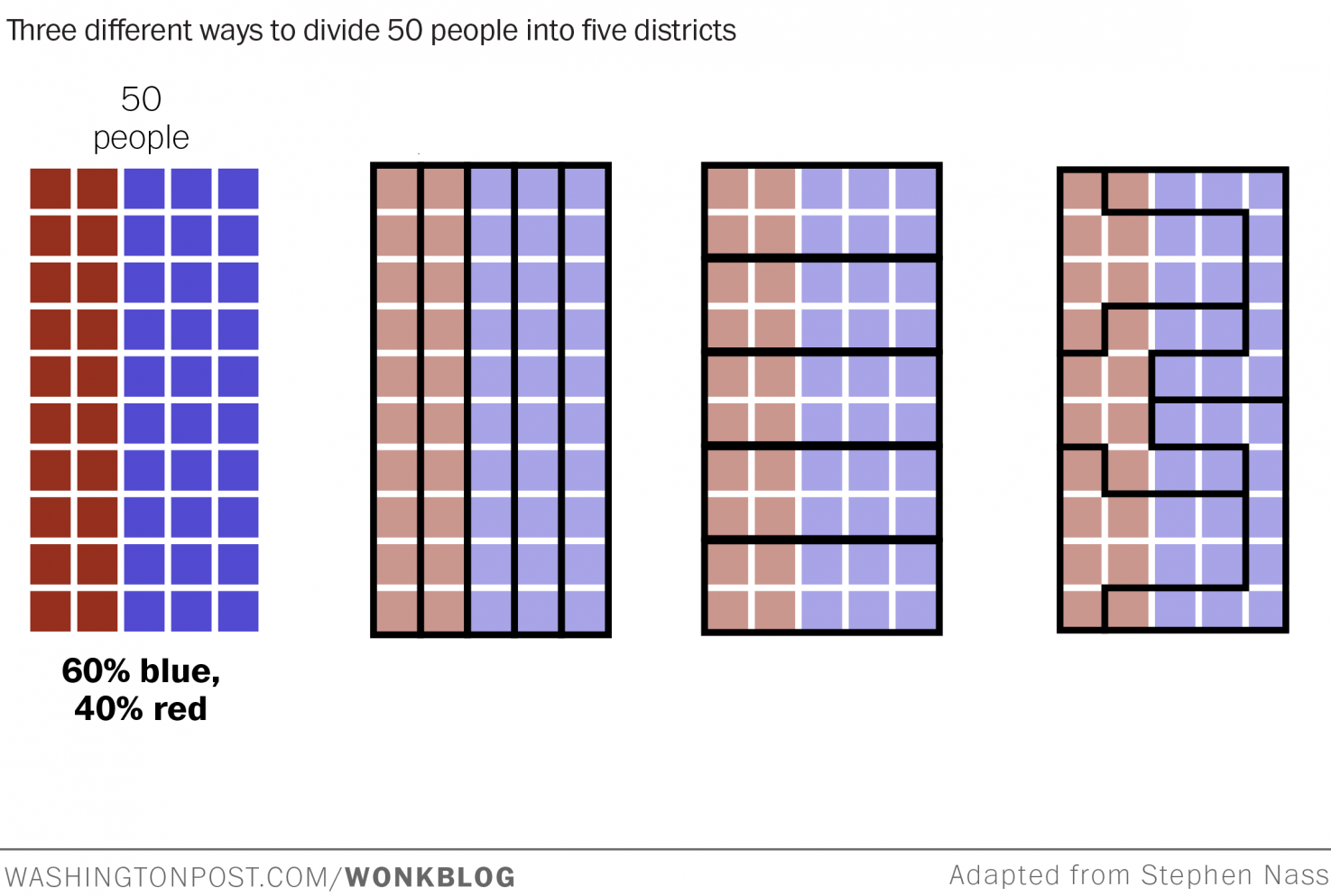

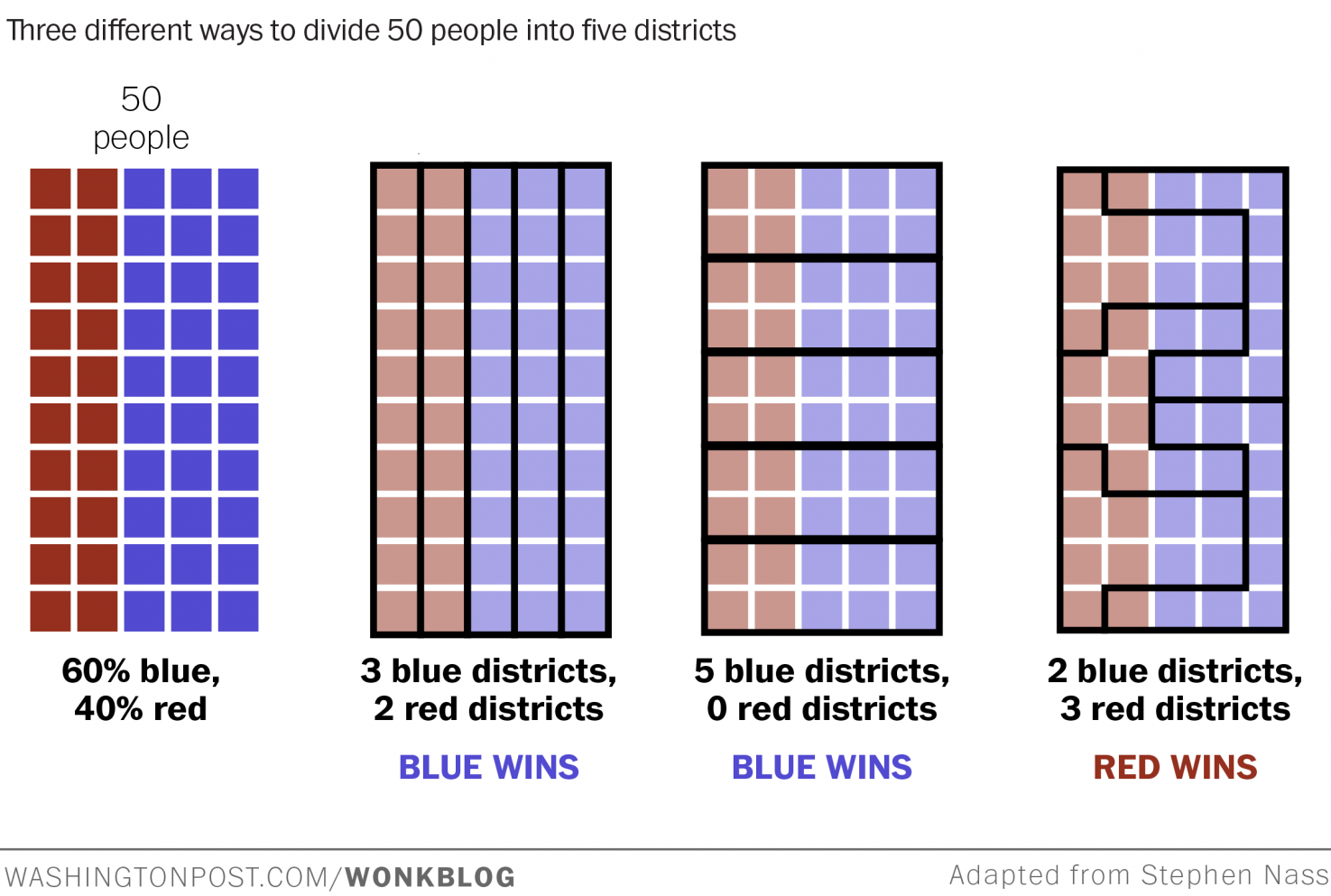

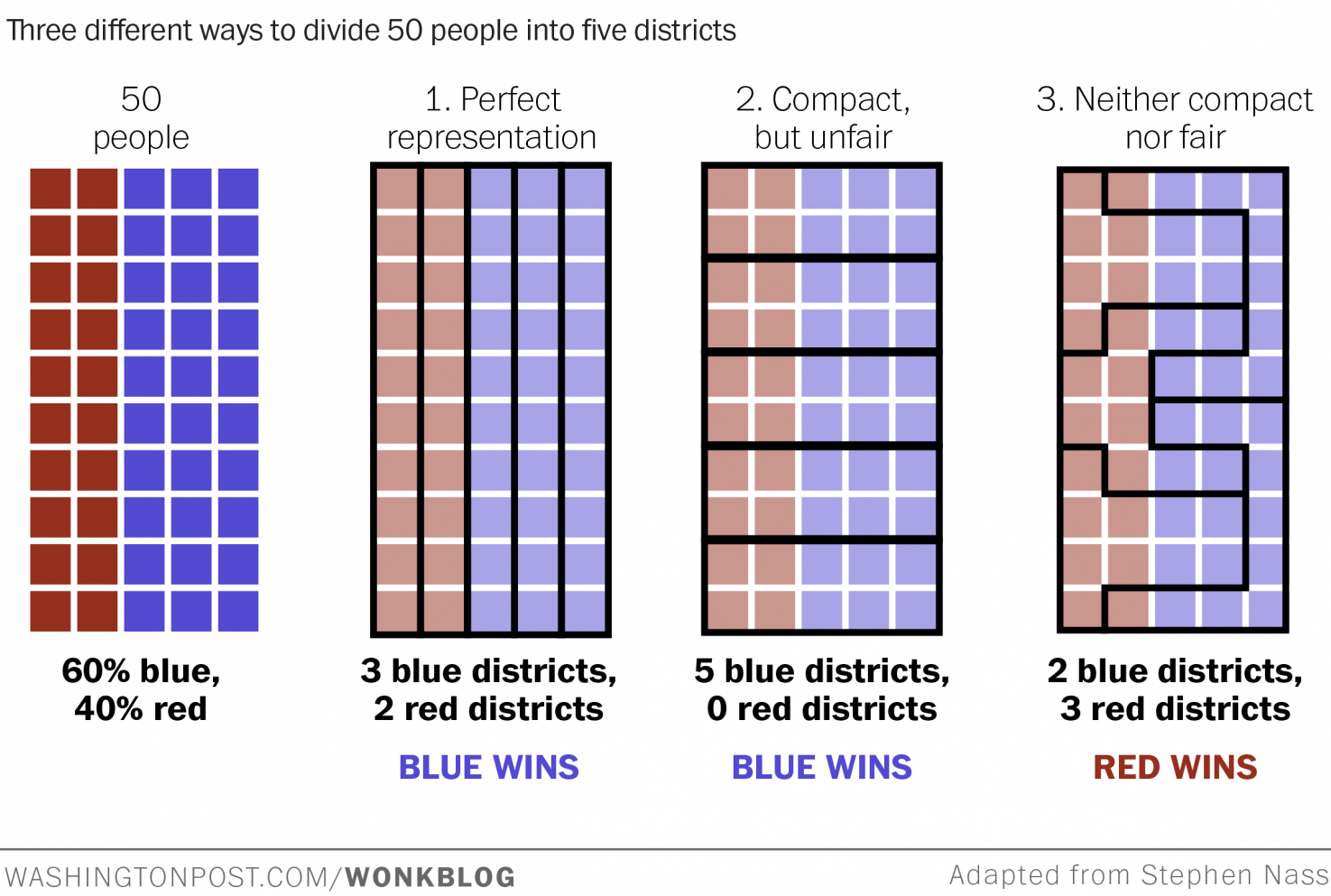

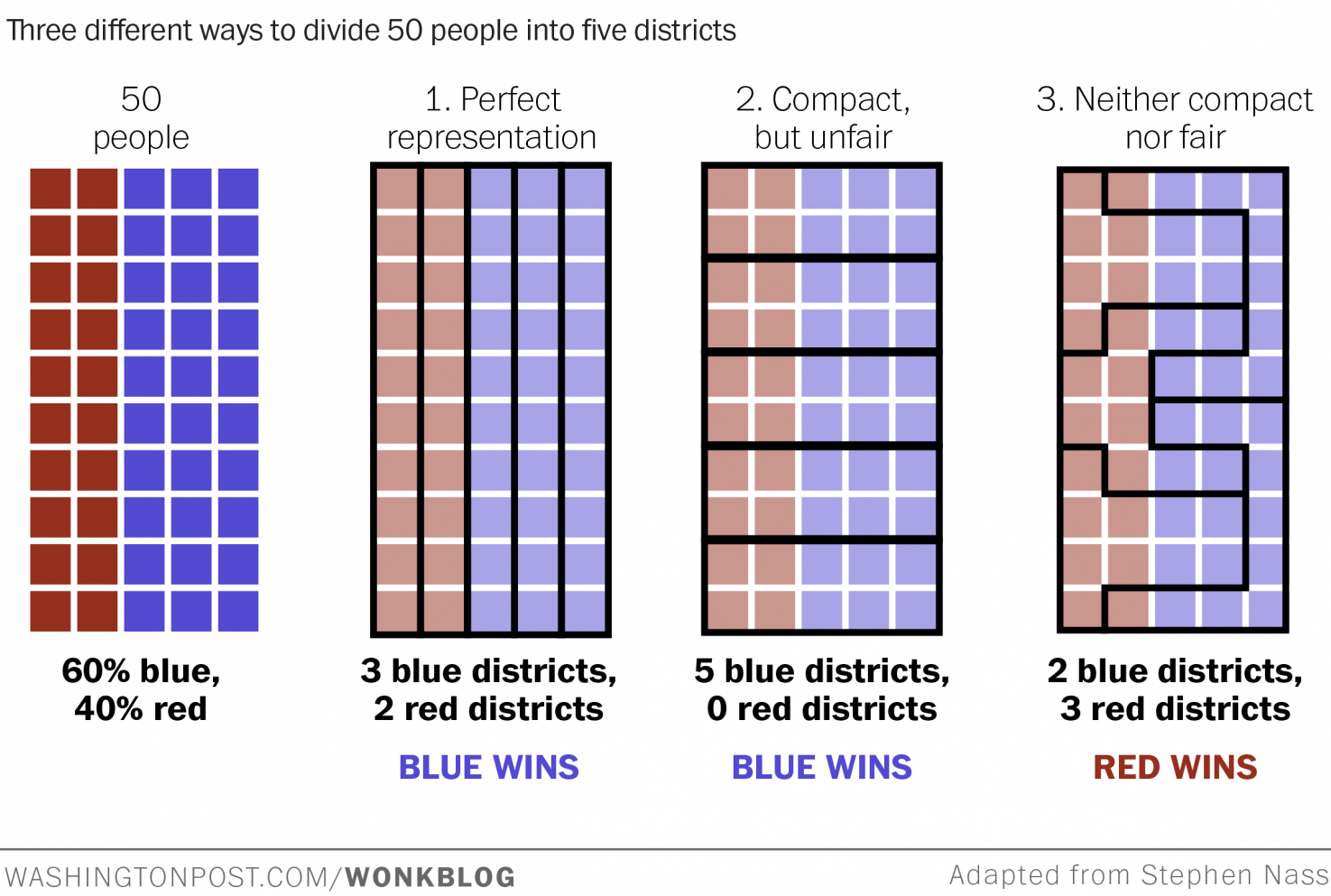

Take 50 people, 60% blue and 40% red, and divide them into 5 districts. Three ways to draw the lines produce very different outcomes:

The same 50 people, with the same party affiliations, produce completely different election results depending on where the lines are drawn. This is gerrymandering: using geometry as a political weapon.
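A small sketch makes the effect easy to check by hand. The three district layouts below are hypothetical vote counts chosen to match the setup (50 voters, 30 blue and 20 red, 5 districts of 10); they are assumptions for illustration and not necessarily the exact maps shown in the figures.

```python
def seats(districts):
    """Count districts won by each party; each district is (blue_votes, red_votes)."""
    blue_seats = sum(1 for blue, red in districts if blue > red)
    red_seats = sum(1 for blue, red in districts if red > blue)
    return blue_seats, red_seats


# Hypothetical maps: every one contains the same 30 blue and 20 red voters.
proportional = [(10, 0), (10, 0), (10, 0), (0, 10), (0, 10)]
blue_sweep = [(6, 4)] * 5                      # blue spread evenly everywhere
red_favoring = [(9, 1), (9, 1), (4, 6), (4, 6), (4, 6)]  # blue packed into two districts

results = {
    "proportional": seats(proportional),
    "blue_sweep": seats(blue_sweep),
    "red_favoring": seats(red_favoring),
}
```

With these assumed layouts the seat counts come out 3 to 2, 5 to 0, and 2 to 3 for blue versus red, even though no voter changed their mind.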

The Efficiency Gap: A Metric for Fairness

Can we quantify how gerrymandered a map is? Yes — with wasted votes:

- Any vote for the district winner beyond the 50% needed to win is wasted (the winner didn’t need it)

- Any vote for the loser is wasted (it didn’t help elect anyone)

- For each district: count votes above 50% for the winner + all votes for the loser

- Sum wasted votes by party across all districts

- Efficiency gap = |Party A wasted − Party B wasted| / total votes cast

A perfectly fair map has gap ≈ 0. A gerrymandered map has a large gap — one party systematically wastes far more votes than the other.
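The steps above translate almost line for line into code. This is a minimal two-party sketch that follows the definition as given here (50% of the district's votes as the winning threshold, absolute difference in the numerator). The function names, the tie rule, and the example maps are assumptions for illustration.

```python
def wasted_votes(blue, red):
    """Return (blue_wasted, red_wasted) for one two-party district."""
    threshold = (blue + red) / 2  # the 50% mark used in the definition above
    if blue > red:
        return blue - threshold, red   # winner's surplus, all of loser's votes
    if red > blue:
        return blue, red - threshold
    return 0.0, 0.0  # assumption: an exact tie wastes no votes for either side


def efficiency_gap(districts):
    """|blue wasted - red wasted| / total votes, over a list of (blue, red) districts."""
    blue_wasted = red_wasted = 0.0
    total = 0
    for blue, red in districts:
        bw, rw = wasted_votes(blue, red)
        blue_wasted += bw
        red_wasted += rw
        total += blue + red
    return abs(blue_wasted - red_wasted) / total


# Hypothetical maps for 30 blue and 20 red voters in 5 districts of 10.
proportional_map = [(10, 0), (10, 0), (10, 0), (0, 10), (0, 10)]
packed_map = [(9, 1), (9, 1), (4, 6), (4, 6), (4, 6)]

gap_proportional = efficiency_gap(proportional_map)
gap_packed = efficiency_gap(packed_map)
```

For these assumed maps the gap is 0.1 for the proportional layout and 0.3 for the packed one, where blue wastes 20 votes to red's 5.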

We want to minimize the gap!

This concept was formalized in a peer-reviewed paper and has been cited in actual court cases challenging gerrymandered maps.

The Rabbit Hole Closes… and Opens Again

Here’s where it gets delightful. The efficiency gap was developed to measure fairness in political districting. But the idea — counting “misclassified” items and penalizing the algorithm for it — applies directly to pixel clustering.

Wasted votes = pixels assigned to the wrong superpixel region.

The efficiency gap formula, slightly reinterpreted, becomes a cost function for evaluating how well a superpixel algorithm honored the color boundaries of the original image. The political science problem illuminated the computer vision problem, and vice versa.
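The notes do not spell out the reinterpreted formula, so the following is only one possible reading, offered as a sketch. It assumes a reference labeling of the image is available (for example, a hand-marked segmentation), treats each superpixel's majority reference label as its "winner", and counts every other pixel in that superpixel as wasted. The function name and toy data are hypothetical.

```python
from collections import Counter


def misassigned_fraction(superpixels, reference):
    """Fraction of pixels whose reference label differs from their superpixel's majority label."""
    votes_by_region = {}
    for sp_row, ref_row in zip(superpixels, reference):
        for sp, ref in zip(sp_row, ref_row):
            votes_by_region.setdefault(sp, Counter())[ref] += 1

    total = sum(sum(votes.values()) for votes in votes_by_region.values())
    # Pixels outside the majority label of their region are the "wasted votes".
    wasted = sum(
        sum(votes.values()) - max(votes.values())
        for votes in votes_by_region.values()
    )
    return wasted / total


# Toy 4x1 example: the true color boundary sits before the last pixel.
reference_labels = [["red", "red", "red", "blue"]]
midpoint_split = [[0, 0, 1, 1]]   # region 1 straddles the boundary
boundary_split = [[0, 0, 0, 1]]   # regions follow the boundary

cost_midpoint = misassigned_fraction(midpoint_split, reference_labels)
cost_boundary = misassigned_fraction(boundary_split, reference_labels)
```

Under this reading, a segmentation that straddles a color boundary pays for each pixel on the wrong side, and one that follows the boundary costs nothing.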

The rabbit hole questions:

- What would Nate Silver do with the gap?

- What happens in the limit?

- What happens in a multi-party system?

- Is there a maximum-likelihood estimator for the gap?

- Can I apply this within a clustering algorithm on pixels?

Strong Connectivity

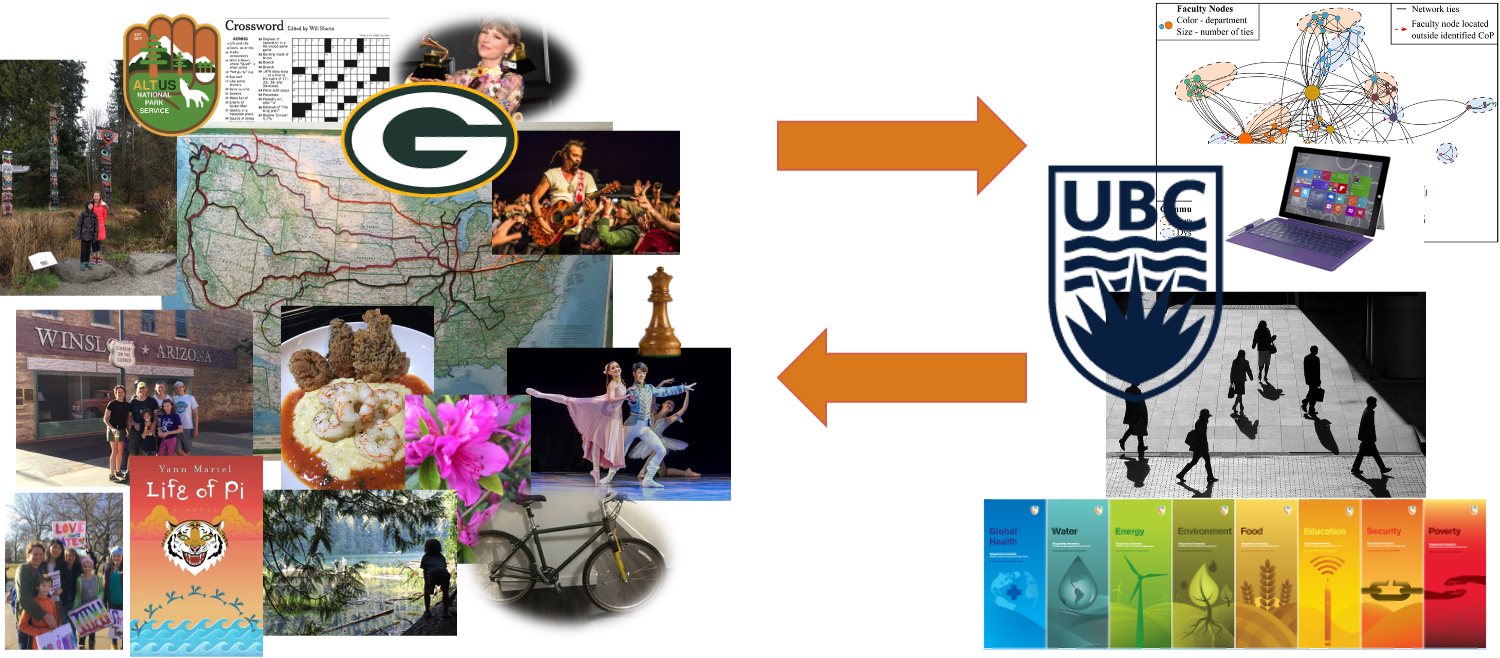

Tiffany stained glass tree ⇄ Canadian Charter of Rights and Freedoms

A Tiffany stained glass tree and the Canadian Charter of Rights and Freedoms. Two things that have nothing to do with each other — except that the quest for one led directly to a deeper understanding of the other.

The arrows go both ways. The stained glass ambition raised the question of how to evaluate clustering quality, which led to gerrymandering, which led to the efficiency gap, which fed back as a new tool for the original image segmentation problem.

That bidirectional influence, where learning flows in both directions, is what graph theorists call strong connectivity. Two vertices in a directed graph are strongly connected if you can get from each one to the other by following edges in their direction. In the graph of ideas, stained glass and electoral fairness are now in the same strongly connected component.
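The definition can be checked directly: compute what each vertex can reach, then group vertices that reach each other. The sketch below does exactly that on a hypothetical "graph of ideas" assembled from this story. The edge list and function names are assumptions for illustration, and the approach favors clarity over speed (Tarjan's or Kosaraju's algorithm finds the same components in linear time).

```python
def reachable(graph, start):
    """All vertices reachable from `start` along directed edges (iterative DFS)."""
    seen = {start}
    stack = [start]
    while stack:
        vertex = stack.pop()
        for neighbor in graph.get(vertex, []):
            if neighbor not in seen:
                seen.add(neighbor)
                stack.append(neighbor)
    return seen


def strongly_connected_components(graph):
    """Group vertices so that every pair in a group can reach each other."""
    vertices = set(graph) | {w for targets in graph.values() for w in targets}
    reach = {v: reachable(graph, v) for v in vertices}
    components, assigned = [], set()
    for v in sorted(vertices):
        if v in assigned:
            continue
        # v and w are strongly connected when each is in the other's reach set.
        component = {w for w in reach[v] if v in reach[w]}
        components.append(component)
        assigned |= component
    return components


# Hypothetical directed graph: an edge means "this idea led to that one".
ideas = {
    "national parks": ["stained glass"],
    "stained glass": ["superpixels"],
    "superpixels": ["gerrymandering"],
    "gerrymandering": ["efficiency gap"],
    "efficiency gap": ["stained glass"],  # the gap feeds back into segmentation
}

idea_components = strongly_connected_components(ideas)
```

In this assumed graph the four ideas on the cycle form one component, while "national parks" sits alone: it leads into the cycle, but nothing leads back to it.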

A Diversity Diversion

- Speed: Cognitively diverse teams solve problems up to 3× faster and consider 48% more solutions (HBR/Scientific American)

- Accuracy: Groups with diverse members are more likely to correct errors and avoid groupthink (NeuroLeadership Institute)

- Innovation: Cognitive diversity can improve team innovation by 20% (Journal of Applied Psychology)

Most of this research focuses on inherent diversity — characteristics we’re born with or into. But this talk was about something different.

Acquired diversity is the breadth of your interests, experiences, and connections. Travel. Books and games. Music. Art. Food. Coding for fun. Building something. Planting a garden.

The connections between stained glass, Voronoi diagrams, image clustering, and electoral fairness didn’t come from a plan. They came from following curiosity — from having enough acquired diversity that when a new problem appeared, the old one lit up in recognition.

“We cannot predict which connections will be important — so build as many as you can. Do things for beauty, for fun, or because you are afraid of them.”

Your Joyful Interests Are Not a Distraction

Here is something worth holding onto as you move through your career:

The things you love for their own sake — the chess, the crosswords, the cycling, the music, the Taylor Swift deep dives, whatever it is for you — are not in competition with your professional skills. They are the source of them.

The story of stained glass → superpixels → gerrymandering → efficiency gap → better clustering is not unusual. It is the normal way that breakthroughs happen. Someone who loves two things finds that one explains the other. Someone bored at a concert has an algorithm idea. Someone gardening realizes the solution to a data structure problem they’d been stuck on for weeks.

You are not wasting time when you pursue things that delight you. You are building the cross-domain vocabulary that will make you unexpectedly, disproportionately good at your required tasks. The chess player sees search trees differently. The musician hears rhythm in loops. The crossword solver is comfortable with constraint satisfaction. The cyclist knows what it feels like to optimize over a long, bumpy route.

Expertise in one domain gives you metaphors, intuitions, and tools that transfer to other domains in ways you cannot predict in advance. The only way to have those cross-domain tools when you need them is to acquire them before you need them — which means following your interests now, without justification, and trusting that the connections will emerge.

Your curiosity is not frivolous. It is your competitive advantage.

Do things for beauty, for fun, or because you are afraid of them. We cannot predict which connections will be important — so build as many as you can.

The Full Course Arc

| Week | Topic |
|---|---|
| 1 | Algorithms and efficiency |
| 2 | Representation, lists, strings |
| 3 | Classes, friendship graphs |
| 4 | Bracelets, loops, recursion |
| 5 | Pandas, data analysis |
| 6 | Dictionaries |
| 7 | Reading week |
| 8 | Graphs, BFS, DFS |
| 9 | Voronoi, weighted graphs |
| 10 | Sudoku, constraint solving |
| 11 | Maps, Dijkstra’s algorithm |
| 12 | TSP, permutations |
| 13 | Text as data, NLP, NER |
| 14 | Social networks, SCC |

From counting bracelet patterns to inferring social networks from Victorian novels — it’s been quite a journey. The toolkit you’ve built is real, and it will keep paying off in ways you can’t predict yet.