Programming, problem solving, and algorithms

CPSC 203, 2025 W2

April 2, 2026

Announcements

Text as Data

From Last Time

Resource for our nlp tool: spaCy

Key observation: when a big string of text is run through the processor, many, many attributes are introduced!

Last time we counted word “token”s. Today: POS and NER.

Parts of Speech

Helps us understand the meaning of language.

Parts of Speech

Open class — new words can always be added: Noun, Verb, Adjective, Adverb

Closed class — fixed, finite set: Preposition, Conjunction, Pronoun, Interjection

Nouns subdivide: proper (Emma, Hartfield) vs common (village, house)

→ Proper nouns are often named entities — this is our bridge to NER

POS Tagging is Hard

The word “curling” can be three different things depending on context:

Plug in the curling iron. → adjective (JJ)

I want to learn to play curling. → noun (NN)

The ribbon is curling around the Maypole. → verb (VBG)

spaCy POS: Two Levels

spaCy gives you two levels of detail on every token:

| Attribute | Grain | Example values |

|---|---|---|

token.pos_ |

Coarse (universal) | NOUN, VERB, PROPN, ADJ, ADP |

token.tag_ |

Fine (Penn Treebank) | NN, VBD, NNP, JJ, IN |

For the sentence “Emma lost no time.”:

PROPN: proper noun, a signal that this token might be a named entity

From POS to Named Entities

POS tagging labels every token individually.

But named entities are often multi-word spans: “Mr. Knightley”, “Hartfield”, “Emma Woodhouse”

NER goes farther: it groups tokens into spans and assigns a category:

PERSON— people and charactersLOC/GPE— placesORG— organizations

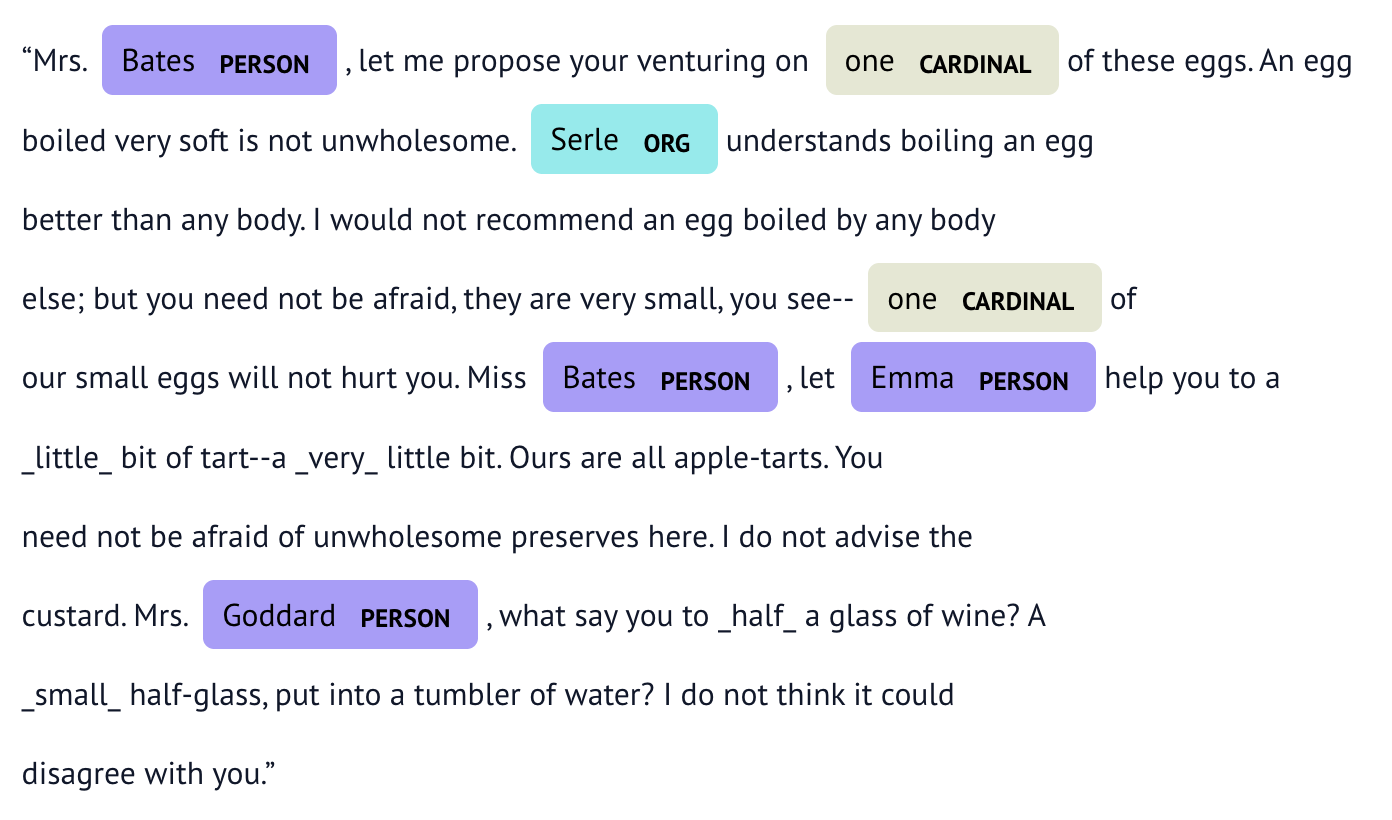

Named Entity Recognition

Essentially: tagging proper nouns

NER used for inferring relationships between entities:

- PERSON lives LOCATION

- LOCATION has ORGANIZATION

NER

- Underline all of the proper nouns (named entities) in this text:

Harriet Smith’s intimacy at Hartfield was soon a settled thing. Quick and decided in her ways, Emma lost no time in inviting, encouraging, and telling her to come very often; and as their acquaintance increased, so did their satisfaction in each other. As a walking companion, Emma had very early foreseen how useful she might find her. In that respect Mrs. Weston’s loss had been important. Her father never went beyond the shrubbery, where two divisions of the ground sufficed him for his long walk, or his short, as the year varied; and since Mrs. Weston’s marriage her exercise had been too much confined. She had ventured once alone to Randalls, but it was not pleasant; and a Harriet Smith, therefore, one whom she could summon at any time to a walk, would be a valuable addition to her privileges. But in every respect, as she saw more of her, she approved her, and was confirmed in all her kind designs.Typical categories of entities are PERSON, LOCATION, ORGANIZATION. Think about how you might discover each of the entities using a program.

IOB Encoding

NER marks which tokens belong to an entity using IOB tags:

| Tag | Meaning | Example |

|---|---|---|

B |

Beginning of an entity | ('Harriet', 'B', 'PERSON') |

I |

Inside (continuing) an entity | ('Smith', 'I', 'PERSON') |

O |

Outside — not part of any entity | ('was', 'O', '') |

So “Harriet Smith was late” becomes:

NER with SpaCy

- The PL activity contains a file called

ner_nb.py. Modify and execute this file to answer the following questions. In each case, sketch an example of the output, and explain it briefly in English.

load a OFK excerpt from

ofk_ch1Short.txtcreate a spaCy doc object with

doc = nlp(textRaw)

NER with SpaCy

If

docis the result of part b, What doessents = list(doc.sents)do?If

sentsis the result of part c, what doessentWords = [[token.text for token in sent] for sent in sents]do?If

sentsis the result of part c, what doessentWordsPOS = [[(token.text, token.pos_) for token in sent] for sent in sents]do?

NER with SpaCy

If

sentsis the result of part c, what doesents_by_sent = [[(ent.text, ent.label_) for ent in sent.ents] for sent in sents]do?If

sentsis the result of part c, what doessentWordsNER = [[(token.text, token.ent_iob_, token.ent_type_) for token in sent] for sent in sents]do?If

docis the result of part b, what doesnames = [ent.text for ent in doc.ents if ent.label_ == "PERSON"]do?